Our very own Ryan Basdeo recently presented at the PSG 2026 conference on the evolving role of Artificial Intelligence (AI) in the pharmaceutical industry. As digital technologies continue to transform manufacturing, his talk explored the opportunities and challenges of bringing AI into GxP-regulated environments, specifically for the visual inspection of injectable drug products.

With over sixteen years of experience in the field, Ryan contributed practical insights on how to shift AI from the mystery of a “black box” into a fully transparent, audit-ready system.

Here are some key takeaways and considerations when deciding to integrate AI into your visual inspection processes:

The Limits of Traditional Automation

Visual inspection is a critical step to ensure injectable batches are free from visible particulates and container closure defects. While traditional Automated Visual Inspection (AVI) machines provide consistency and handle thousands of units per hour, they rely on rigid, rule-based logic programmed by humans. That rigid logic copes poorly with normal product variability and often leads to costly false rejections, particularly for difficult-to-inspect products (DIPs) like lyophilized cakes, low-fill vials, and IV bags.

Deep Learning Mimics Human Pattern Recognition

AI overcomes the performance limits of traditional automated inspection through supervised learning. Trained on thousands of images labeled by human experts, the model does not just memorize images; it builds strong decision logic based on data. This allows the system to recognize nuanced patterns, much like a human inspector, improving production yield while tightening defect tolerance.
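
The trade-off between yield and defect tolerance can be made concrete with two simple metrics. The following is a minimal sketch, not a validated method: the `InspectionResult` type, the function names, and the sample counts are all illustrative assumptions and do not come from the presentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InspectionResult:
    """Hypothetical record pairing an expert label with a machine decision."""
    is_defective: bool  # ground truth from expert labeling
    rejected: bool      # decision made by the inspection system


def false_reject_rate(results):
    """Share of good units that the system rejected (lower is better for yield)."""
    good = [r for r in results if not r.is_defective]
    if not good:
        raise ValueError("no good units in the sample")
    return sum(r.rejected for r in good) / len(good)


def detection_rate(results):
    """Share of defective units that the system rejected (higher is better)."""
    defective = [r for r in results if r.is_defective]
    if not defective:
        raise ValueError("no defective units in the sample")
    return sum(r.rejected for r in defective) / len(defective)


# Illustrative counts only: 100 good units (5 falsely rejected)
# and 10 defective units (9 correctly rejected).
sample = (
    [InspectionResult(is_defective=False, rejected=False)] * 95
    + [InspectionResult(is_defective=False, rejected=True)] * 5
    + [InspectionResult(is_defective=True, rejected=True)] * 9
    + [InspectionResult(is_defective=True, rejected=False)] * 1
)

print(f"False reject rate: {false_reject_rate(sample):.2%}")
print(f"Detection rate:    {detection_rate(sample):.2%}")
```

Tracking both numbers together is what shows whether a model really improves yield without letting more defects through.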

The Three Pillars of AI Compliance

To confidently establish a qualified, audit-ready state for AI in manufacturing, organizations must focus on three critical compliance pillars:

- Data Governance: The model is only as good as the information it learns from. Data must be reliable, representative, and split into separate, non-overlapping training, tuning, and testing sets to ensure unbiased learning and evaluation.

- Transparency (Explainable AI): To satisfy regulatory requirements, Quality teams must understand why an AI made a decision. Explainable AI (XAI) utilizes visual tools like heat maps to highlight the exact pixels that triggered a rejection, proving the model detected a real defect rather than background noise like a shadow or reflection.

- Control: A compliant system must be deterministic, meaning the same input always produces the same result, so it meets acceptance criteria every single time. This is achieved by “freezing” the model so it stops adapting during live operations, and by utilizing Human-in-the-Loop (HITL) oversight to manage risks and unexpected edge cases.
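
Two of these pillars lend themselves to a short illustration. The sketch below shows one possible way to make a data split reproducible and to check that a deployed model is still the approved, frozen version. It rests on several assumptions that are not from the presentation: the 70/15/15 ratios, hashing by a unit ID, the function names, and the use of a SHA-256 fingerprint are all hypothetical choices made for the example.

```python
import hashlib

# Assumed split ratios in percent; a real project would justify its own.
SPLITS = (("training", 70), ("tuning", 15), ("testing", 15))


def assign_split(unit_id: str) -> str:
    """Map a unit ID to a data set in a repeatable way.

    Hashing the ID (rather than shuffling at random) means the same unit
    always lands in the same set, so the split can be reproduced later.
    """
    digest = hashlib.sha256(unit_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # value in 0..99
    upper = 0
    for name, share in SPLITS:
        upper += share
        if bucket < upper:
            return name
    raise AssertionError("split shares must sum to 100")


def model_fingerprint(model_bytes: bytes) -> str:
    """Return a SHA-256 hex digest of the serialized model."""
    return hashlib.sha256(model_bytes).hexdigest()


def is_frozen(model_bytes: bytes, approved_fingerprint: str) -> bool:
    """Check that the model in use matches the fingerprint recorded at approval."""
    return model_fingerprint(model_bytes) == approved_fingerprint


# Usage with placeholder data (the IDs and bytes are illustrative only).
for unit in ("vial-0001", "vial-0002", "vial-0003"):
    print(unit, "->", assign_split(unit))

approved = model_fingerprint(b"serialized-model-v1")
print("Unchanged model accepted:", is_frozen(b"serialized-model-v1", approved))
print("Modified model accepted: ", is_frozen(b"serialized-model-v2", approved))
```

If several images are taken of the same container, assigning the split by unit rather than by image keeps all of them in one set, which avoids the same unit appearing in both training and testing data.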

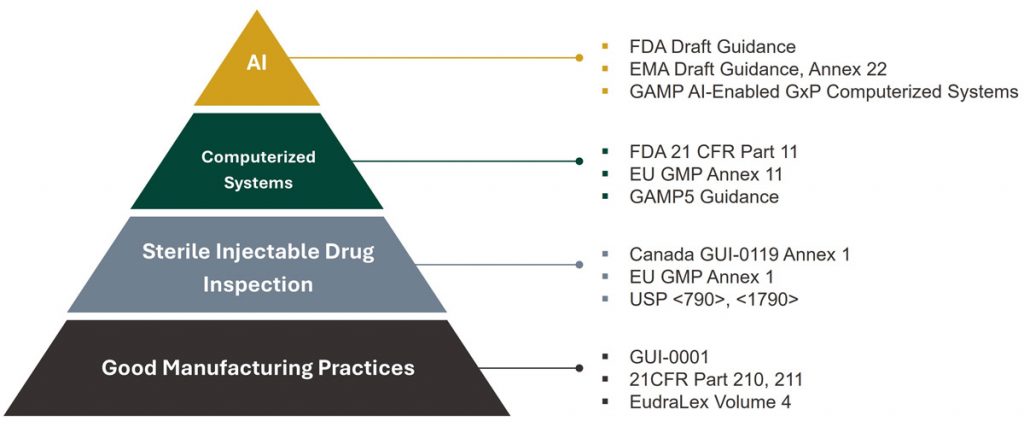

The SAGE Perspective

In our experience, AI is not a completely new regulatory hurdle, but rather a new layer being added to the robust, pre-existing compliance frameworks the industry has relied on for decades. Implementing AI successfully is a multidimensional challenge that requires bridging the gap between data scientists, vision experts, and quality specialists.

The long-term trajectory for pharmaceutical manufacturing certainly includes smarter, proactive AI integration—ultimately pushing toward the vision of Pharma 5.0. As an engineering partner, SAGE is here to support organizations as they navigate these technological leaps—from foundational AI strategy through to detailed testing and validation. If you’re considering how to scale or automate your visual inspection processes using AI, we’d be happy to discuss how we can help.